What is Ω-notation?

Ω (Omega) notation gives an asymptotic lower bound on a function, usually an algorithm's cost measured in time, comparisons, or operations. Saying that a cost is Ω(g(n)) means that, for all sufficiently large n, it is at least a constant multiple of g(n).

f(n) ∈ Ω(g(n)) ⇔ ∃ c > 0, n₀ ≥ 0 such that f(n) ≥ c · g(n) for all n ≥ n₀
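To make the quantifiers concrete, here is a minimal Java sketch that tests a proposed pair (c, n₀) by checking f(n) ≥ c · g(n) for every n from n₀ up to a finite cutoff. The class `OmegaCheck`, the method `holdsOnRange`, and the sample function f(n) = 5n − 20 are hypothetical. A finite scan can refute a bad choice of constants or build confidence in a good one, but it does not prove the asymptotic claim.

```java
import java.util.function.LongToDoubleFunction;

final class OmegaCheck {
    /**
     * Returns true if f(n) >= c * g(n) for every n in [n0, nMax].
     * Only a finite range is checked: this is a sanity check, not a proof.
     */
    static boolean holdsOnRange(LongToDoubleFunction f, LongToDoubleFunction g,
                                double c, long n0, long nMax) {
        for (long n = n0; n <= nMax; n++) {
            if (f.applyAsDouble(n) < c * g.applyAsDouble(n)) {
                return false; // counterexample found inside the range
            }
        }
        return true;
    }

    public static void main(String[] args) {
        LongToDoubleFunction f = n -> 5.0 * n - 20; // hypothetical f(n) = 5n - 20
        LongToDoubleFunction g = n -> n;            // g(n) = n
        // 5n - 20 >= 1 * n  <=>  n >= 5, so n0 = 5 works and n0 = 1 does not.
        System.out.println(holdsOnRange(f, g, 1.0, 5, 1_000_000)); // true
        System.out.println(holdsOnRange(f, g, 1.0, 1, 1_000_000)); // false
    }
}
```

Since 5n − 20 ≥ n exactly when n ≥ 5, n₀ = 5 passes and n₀ = 1 fails: the definition only requires the inequality from some point on.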

Why is it important?

- Guarantees a floor on cost: it tells us the least amount of work that must be done.

- Proves impossibility: for many problems, a known lower bound rules out faster algorithms (e.g., every comparison-based sort needs Ω(n log n) comparisons in the worst case, so no comparison sort can be asymptotically faster).

- Supports tight bounds: combined with a matching Big-O bound, it gives a Θ (tight) bound.
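As a small illustration of how an Ω bound and a matching O bound combine into Θ, the sketch below checks, over a finite range, that the pair count n(n+1)/2 (the number of pairs (i, j) with 1 ≤ i ≤ j ≤ n) stays between n²/2 and n² for n ≥ 1, i.e. constants c₁ = 1/2, c₂ = 1 and n₀ = 1. The class and method names are hypothetical. The algebra behind it: n(n+1)/2 ≥ n²/2 always holds, and n(n+1)/2 ≤ n² holds whenever n ≥ 1.

```java
final class ThetaSandwich {
    /** Exact number of pairs (i, j) with 1 <= i <= j <= n. */
    static long pairs(long n) {
        return n * (n + 1) / 2;
    }

    /** Checks n^2/2 <= pairs(n) <= n^2 for every n in [1, nMax], in integer arithmetic. */
    static boolean sandwiched(long nMax) {
        for (long n = 1; n <= nMax; n++) {
            long f = pairs(n);
            long sq = n * n;
            // Omega side: 2 * f >= n^2 (avoids the fractional n^2 / 2)
            // O side:     f <= n^2
            if (2 * f < sq || f > sq) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(sandwiched(100_000)); // true
    }
}
```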

How do we use it?

- To prove a minimum baseline cost (e.g., any algorithm that must read all n input items takes Ω(n) time).

- To establish theoretical limits for problem classes.

- To judge optimality: if your algorithm runs in O(n log n) and the problem is Ω(n log n), then your algorithm is asymptotically optimal.
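The optimality argument can be checked empirically for small inputs. The sketch below counts the element comparisons made by a standard top-down merge sort (an O(n log n) comparison sort) and compares its worst case with ⌈log₂(n!)⌉, the decision-tree lower bound that every comparison sort must meet in the worst case (log₂(n!) is itself Θ(n log n)). Because the bound is about the worst case, the sketch takes the maximum over all n! orderings, which is only feasible for small n; a single friendly input such as an already sorted array can use fewer comparisons. The class and method names are hypothetical, and the bound is computed in floating point, which is adequate at these sizes but is not exact arithmetic.

```java
final class SortLowerBound {
    private static long comparisons; // incremented on every element-to-element comparison

    /** Top-down merge sort of a[lo, hi), counting comparisons. */
    private static void mergeSort(int[] a, int[] tmp, int lo, int hi) {
        if (hi - lo < 2) return;
        int mid = (lo + hi) >>> 1;
        mergeSort(a, tmp, lo, mid);
        mergeSort(a, tmp, mid, hi);
        int i = lo, j = mid, k = lo;
        while (i < mid && j < hi) {
            comparisons++;
            tmp[k++] = (a[i] <= a[j]) ? a[i++] : a[j++];
        }
        while (i < mid) tmp[k++] = a[i++];
        while (j < hi) tmp[k++] = a[j++];
        System.arraycopy(tmp, lo, a, lo, hi - lo);
    }

    /** Comparisons merge sort makes on one input (the input array is not modified). */
    static long countComparisons(int[] input) {
        int[] a = input.clone();
        comparisons = 0;
        mergeSort(a, new int[a.length], 0, a.length);
        return comparisons;
    }

    /** ceil(log2(n!)): worst-case comparisons any comparison sort needs for n distinct keys. */
    static long lowerBound(int n) {
        double bits = 0;
        for (int k = 2; k <= n; k++) {
            bits += Math.log(k) / Math.log(2);
        }
        return (long) Math.ceil(bits - 1e-9); // tolerance guards against rounding just above an integer
    }

    /** Maximum comparisons over all n! orderings of 0..n-1; only feasible for small n. */
    static long worstCase(int n) {
        int[] p = new int[n];
        for (int i = 0; i < n; i++) p[i] = i;
        return worstCaseFrom(p, 0);
    }

    // Generates every permutation of p[start..] by swapping, keeping the largest count.
    private static long worstCaseFrom(int[] p, int start) {
        if (start == p.length) return countComparisons(p);
        long worst = 0;
        for (int i = start; i < p.length; i++) {
            swap(p, start, i);
            worst = Math.max(worst, worstCaseFrom(p, start + 1));
            swap(p, start, i); // restore order before the next choice
        }
        return worst;
    }

    private static void swap(int[] p, int i, int j) {
        int t = p[i];
        p[i] = p[j];
        p[j] = t;
    }

    public static void main(String[] args) {
        for (int n = 1; n <= 8; n++) {
            System.out.printf("n=%d  merge sort worst case=%d  lower bound=%d%n",
                    n, worstCase(n), lowerBound(n));
        }
    }
}
```

For n = 4, for example, both numbers come out to 5, so at that size merge sort meets the bound exactly.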

How do we calculate Ω?

1. Dominant-term method

For a polynomial with a positive leading coefficient, keep only the highest-degree term and drop its constant factor.

Example:

4n^3 + 2n^2 + 5 ∈ Ω(n^3)
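The rule is mechanical enough to code. This hypothetical helper takes polynomial coefficients (index i holds the coefficient of nⁱ), skips zero high-order coefficients, and returns the degree k of the leading term, so that p(n) ∈ Ω(nᵏ). It assumes the leading coefficient is positive; with a negative leading coefficient p(n) is eventually negative and the rule does not apply, so the sketch rejects that case.

```java
final class DominantTerm {
    /** coeffs[i] is the coefficient of n^i; returns the degree of the leading term. */
    static int leadingDegree(double[] coeffs) {
        for (int k = coeffs.length - 1; k >= 0; k--) {
            if (coeffs[k] != 0) {
                if (coeffs[k] < 0) {
                    throw new IllegalArgumentException("leading coefficient must be positive");
                }
                return k; // lower-order terms are dropped
            }
        }
        throw new IllegalArgumentException("zero polynomial has no leading term");
    }

    public static void main(String[] args) {
        // 4n^3 + 2n^2 + 5, listed as coefficients of n^0, n^1, n^2, n^3
        double[] f = {5, 0, 2, 4};
        System.out.println("f(n) is in Omega(n^" + leadingDegree(f) + ")"); // f(n) is in Omega(n^3)
    }
}
```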

2. Inequality bounding

Show that f(n) ≥ c · g(n) for some constant c > 0 and all n ≥ n₀.

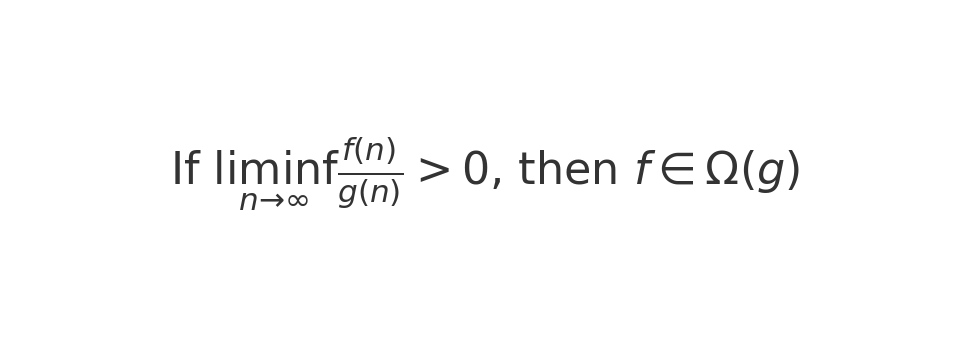
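A worked instance of the method, using a hypothetical f(n) = n² − 10n and g(n) = n², chosen because the negative term forces n₀ > 1:

$$
n^2 - 10n \;\ge\; \tfrac{1}{2}n^2
\iff \tfrac{1}{2}n^2 \ge 10n
\iff n \ge 20 \qquad (n > 0)
$$

So c = 1/2 and n₀ = 20 witness n² − 10n ∈ Ω(n²).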

3. Limits

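If the limit below exists and is positive (it may be +∞),

$$\lim_{n \to \infty} \frac{f(n)}{g(n)} > 0,$$

then f ∈ Ω(g).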
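For example, applying the limit test to the polynomial 4n³ + 2n² + 5 with g(n) = n³:

$$
\lim_{n \to \infty} \frac{4n^3 + 2n^2 + 5}{n^3}
= \lim_{n \to \infty} \left(4 + \frac{2}{n} + \frac{5}{n^3}\right)
= 4 > 0,
$$

so 4n³ + 2n² + 5 ∈ Ω(n³), agreeing with the dominant-term method.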

Step-by-step examples

Example 1: Polynomial

f(n) = 3n^2 + 10n + 5

- For n ≥ 1, 10n + 5 ≥ 0.

- So f(n) ≥ 3n².

- Pick c = 3, n₀ = 1.

Conclusion:

f(n) ∈ Ω(n^2)

Example 2: Code Snippet (Java)

```java
int count = 0;
for (int i = 1; i <= n; i++) {
    // for this i, the inner loop runs n - i + 1 times
    for (int j = i; j <= n; j++) {
        count++;
    }
}
```

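- The inner loop runs $n - i + 1$ times for each value of $i$.

- Total: $\sum_{i=1}^{n} (n - i + 1) = n + (n - 1) + \cdots + 1 = \frac{n(n+1)}{2}$.

- Since $\frac{n(n+1)}{2} \ge \frac{n^2}{2}$ for all $n \ge 1$, the total is $\Omega(n^2)$ with $c = \tfrac{1}{2}$ and $n_0 = 1$.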

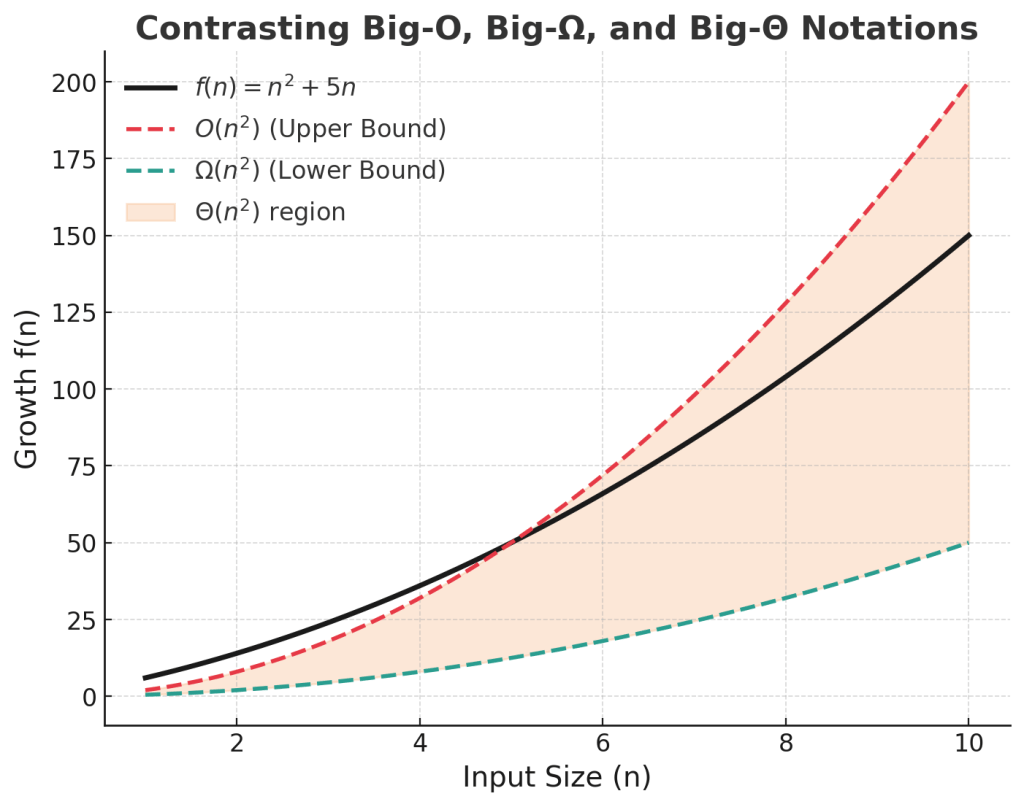
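To confirm the count empirically, the sketch below wraps the nested loop from Example 2 in a method and compares the number of `count++` executions with the closed form n(n+1)/2 and the lower bound n²/2. The class name `NestedLoopCount` is hypothetical, and the counter is widened to `long` so that large n cannot overflow it.

```java
final class NestedLoopCount {
    /** Runs the nested loop from Example 2 and returns how many times count++ executes. */
    static long count(int n) {
        long count = 0;
        for (int i = 1; i <= n; i++) {
            for (int j = i; j <= n; j++) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        for (int n : new int[] {1, 10, 100, 1000}) {
            long c = count(n);
            long closedForm = (long) n * (n + 1) / 2;
            // c should equal n(n+1)/2, and 2c >= n^2 is the same as c >= n^2 / 2
            System.out.printf("n=%d count=%d n(n+1)/2=%d 2*count>=n^2: %b%n",
                    n, c, closedForm, 2 * c >= (long) n * n);
        }
    }
}
```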

Relationship with Big-O and Θ

- O(g): upper bound (at most).

- Ω(g): lower bound (at least).

- Θ(g): tight bound (both O and Ω).

Diagram: Contrasting O, Ω, Θ
