Understanding Theta (Θ) Notation in Algorithms

When we study algorithms, we often come across notations like Big O, Omega, and Theta. Among these, Theta (Θ) plays a special role because it gives us a tight bound on the performance of an algorithm. Let’s explore what it means, why it matters, and how you can use it in practice.

What is Theta (Θ) Notation?

Theta notation (Θ) describes an asymptotically tight bound on a function, usually an algorithm’s running time expressed in terms of the input size n.

In simple words, it tells us both:

- The upper bound (the running time grows no faster than some reference function, up to a constant factor), and

- The lower bound (it grows no slower than that same function, up to a constant factor).

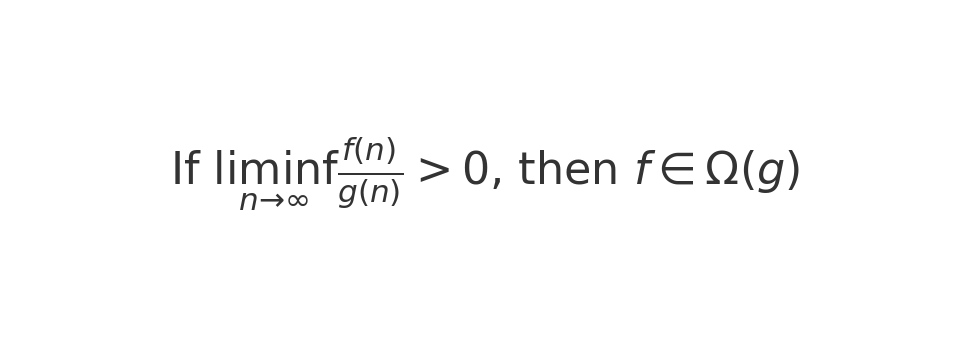

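When we say f(n) ∈ Θ(g(n)), it means that f(n) grows at the same rate as g(n) for large input sizes, up to constant factors. These bounds describe a function, not a best or worst case: you can state a separate Θ bound for an algorithm’s worst-case, best-case, or average-case running time.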
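Formally, following the standard textbook definition, f(n) ∈ Θ(g(n)) when there exist constants c₁, c₂ > 0 and n₀ such that the first line below holds. The second line is a worked instance for the illustrative function f(n) = 3n² + 5n + 2: the left inequality holds for every n ≥ 1, and the right one holds once 5n + 2 ≤ n², which is true for all n ≥ 6. So c₁ = 3, c₂ = 4, n₀ = 6 show that f(n) ∈ Θ(n²).

```latex
0 \le c_1\, g(n) \le f(n) \le c_2\, g(n) \quad \text{for all } n \ge n_0

3n^2 \;\le\; 3n^2 + 5n + 2 \;\le\; 3n^2 + n^2 = 4n^2 \quad \text{for all } n \ge 6
```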

Why is Theta Notation Important?

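- Precise analysis – Big O gives only an upper bound, so an algorithm that runs in Θ(n) time is also, truthfully, O(n²) and O(2ⁿ). Theta pins down the exact growth rate, up to constant factors.

- Realistic performance – It describes how the running time actually grows, rather than a ceiling the algorithm may never come close to.

- Comparison – Because neither side of the bound is loose, it gives a fair way to compare algorithms, whether you are analyzing their worst-case, best-case, or average-case running time.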

For example, if an algorithm’s running time is Θ(n log n) on every input of size n, as with merge sort, then for large n the runtime grows proportionally to n log n no matter what the input contains.
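A minimal Python sketch that checks this empirically by counting the element comparisons merge sort makes. The function name `merge_sort` and the comparison counter are illustrative assumptions. It assumes n is a power of two, where each level of merging costs between n/2 and n comparisons across log₂ n levels. So the count always lands between (n/2)·log₂ n and n·log₂ n, whatever order the input is in.

```python
import math
import random


def merge_sort(values: list[int]) -> tuple[list[int], int]:
    """Return (sorted copy of values, number of element comparisons made)."""
    if len(values) <= 1:
        return list(values), 0
    mid = len(values) // 2
    left, left_comparisons = merge_sort(values[:mid])
    right, right_comparisons = merge_sort(values[mid:])

    merged: list[int] = []
    comparisons = left_comparisons + right_comparisons
    i = j = 0
    while i < len(left) and j < len(right):
        comparisons += 1  # one comparison per element placed while both halves remain
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])   # at most one of these two slices is non-empty
    merged.extend(right[j:])
    return merged, comparisons


random.seed(0)
for n in (2**10, 2**14):
    _, shuffled_count = merge_sort(random.sample(range(n), n))
    _, sorted_count = merge_sort(list(range(n)))
    # Both ratios stay within [0.5, 1.0]; already-sorted input gives exactly 0.5.
    print(n, shuffled_count / (n * math.log2(n)), sorted_count / (n * math.log2(n)))
```

The ratio to n log n stays inside a fixed band as n grows, which is exactly what a Θ(n log n) bound promises.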

How Can I Use Theta Notation?

- Algorithm design: When choosing between two approaches, knowing their Θ complexity helps make the best decision.

- Performance prediction: You can predict how your code will scale with larger inputs.

- Interview preparation: Many technical interviews expect you to provide Θ complexity for your solutions.
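One way to turn a Θ bound into a rough scaling prediction is to assume runtime ≈ c · g(n) for large n and cancel the unknown constant c using one measurement. The sketch below does that. The helper names (`predict_runtime`, `quadratic`, `n_log_n`) and the timings are hypothetical, for illustration only. The estimate ignores lower-order terms and effects such as caching, so it is only trustworthy for large n.

```python
import math
from typing import Callable


def predict_runtime(t_measured: float, n_measured: int, n_target: int,
                    growth: Callable[[int], float]) -> float:
    """Extrapolate a runtime measured at n_measured to n_target,
    assuming runtime ≈ c * growth(n) for some unknown constant c."""
    return t_measured * growth(n_target) / growth(n_measured)


def quadratic(n: int) -> float:
    return float(n * n)


def n_log_n(n: int) -> float:
    return n * math.log2(n)


# Hypothetical measurements, for illustration only.
print(predict_runtime(2.0, 1_000, 2_000, quadratic))  # 8.0: doubling n quadruples a Θ(n²) runtime
print(predict_runtime(1.0, 1_024, 2_048, n_log_n))    # ≈ 2.2: slightly more than double for Θ(n log n)
```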

How Do I Calculate Θ?

To calculate Θ:

- Identify the basic operations of your algorithm (comparisons, additions, recursive calls, etc.).

- Count how many times those operations run for an input of size n.

- Express the count as a mathematical function f(n).

- Simplify the function by dropping constant factors and lower-order terms, then compare it to a common class (e.g., n, n log n, n²).
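The four steps can be traced on a small, hypothetical routine that counts equal pairs in a list by comparing every pair. The function name `count_equal_pairs` is an illustrative assumption, not something from the article. The basic operation is one comparison, and the sketch counts how often it runs so the count can be matched against a formula.

```python
def count_equal_pairs(values: list[int]) -> tuple[int, int]:
    """Return (number of equal pairs i < j, number of comparisons performed)."""
    equal_pairs = 0
    comparisons = 0
    n = len(values)
    for i in range(n):
        for j in range(i + 1, n):
            comparisons += 1  # step 1: the basic operation is this comparison
            if values[i] == values[j]:
                equal_pairs += 1
    return equal_pairs, comparisons


# Step 2: count the operations for several n. Step 3: they match f(n) = n(n-1)/2.
for n in (10, 100, 1000):
    _, comparisons = count_equal_pairs(list(range(n)))
    print(n, comparisons, n * (n - 1) // 2)
```

Step 4: f(n) = n(n−1)/2 = n²/2 − n/2. Dropping the constant factor and the lower-order term leaves n². More carefully, n²/4 ≤ f(n) ≤ n²/2 for every n ≥ 2, so f(n) ∈ Θ(n²).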

Real-World Example of Θ Calculation

Let’s take the task of checking whether a name exists in a phone contact list:

- Suppose the contact list is stored as a sorted array of names.

- If you use binary search, at each step you cut the search space in half.

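- For n contacts, each step halves the remaining range, so a search takes at most ⌊log₂ n⌋ + 1 steps.

- Therefore, the worst-case running time is Θ(log n).

An unsuccessful search must keep halving until the range is empty, so it always needs about log₂ n steps. That is why binary search’s worst case is tightly bound by Θ(log n). A lucky search can find the target on the very first comparison, so its best case is Θ(1).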

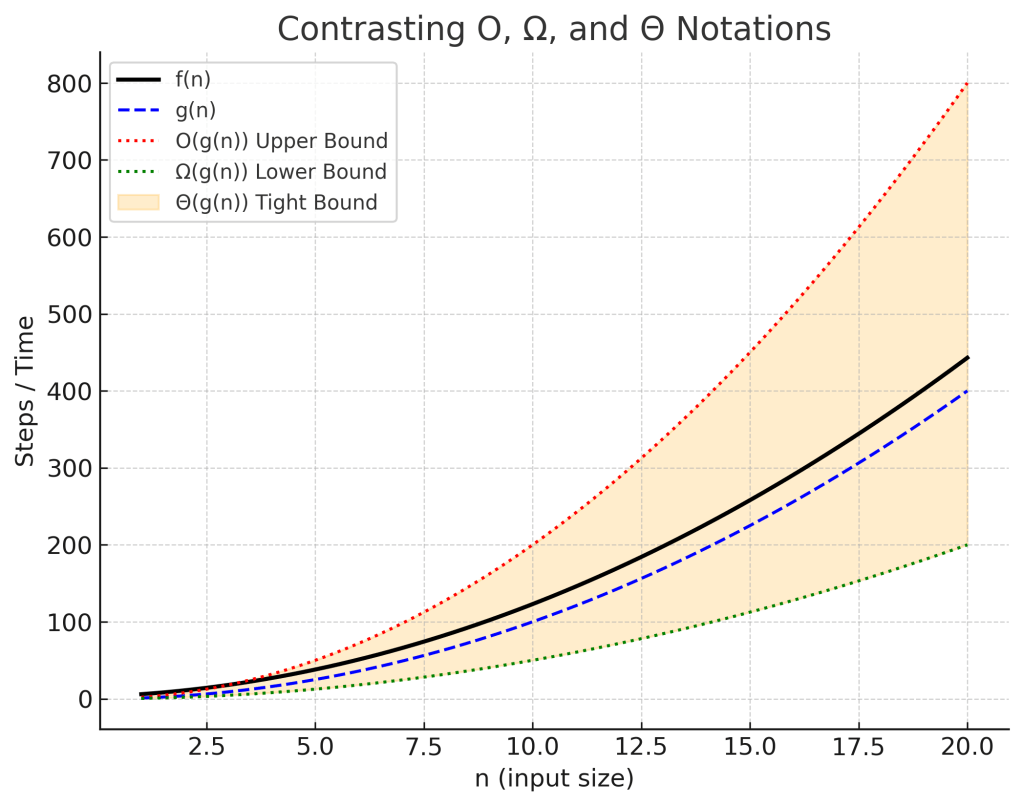

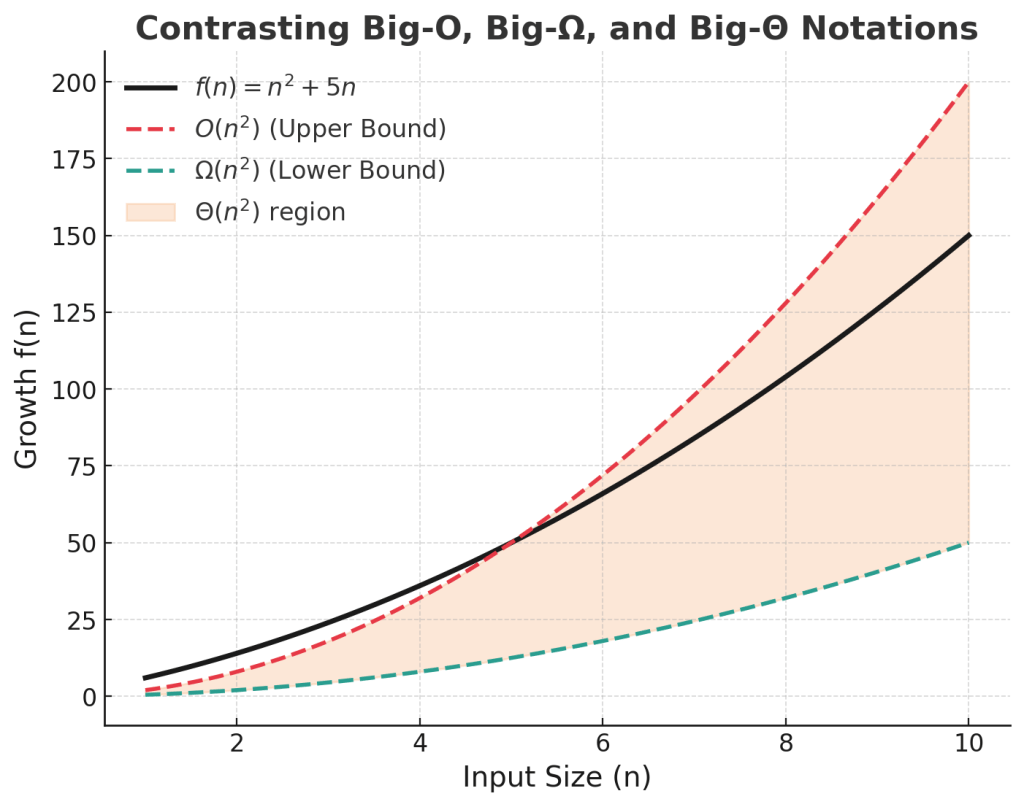
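A minimal Python sketch of binary search over a sorted list of names, instrumented to count steps. The function name `binary_search`, the step counter, and the generated contact names are illustrative assumptions. For n = 1024 contacts, a missing name costs ⌊log₂ n⌋ + 1 = 11 steps, while the name sitting exactly in the middle is found in one.

```python
def binary_search(sorted_names: list[str], target: str) -> tuple[bool, int]:
    """Search a sorted list; return (found, steps), where each step
    examines one middle element and halves the remaining range."""
    lo, hi = 0, len(sorted_names) - 1
    steps = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        steps += 1
        if sorted_names[mid] == target:
            return True, steps
        if sorted_names[mid] < target:
            lo = mid + 1  # target can only be in the right half
        else:
            hi = mid - 1  # target can only be in the left half
    return False, steps


# Hypothetical contact list: zero-padded names, so alphabetical order matches index order.
contacts = [f"contact{i:04d}" for i in range(1024)]

print(binary_search(contacts, "contact0511"))  # (True, 1): the first middle element
print(binary_search(contacts, "zzz"))          # (False, 11): floor(log2 1024) + 1 steps
```

In practice, Python’s standard `bisect` module provides the same halving search on sorted lists; the hand-written loop here exists only to make the step count visible.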

Θ vs Big O: What’s the Difference?

- Big O (O): Gives an upper bound (the function grows no faster than g(n), up to a constant factor).

- Big Omega (Ω): Gives a lower bound (the function grows no slower than g(n), up to a constant factor).

- Theta (Θ): Captures both bounds → it’s the tight bound.
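Written out with the standard textbook definitions (for functions that are nonnegative for large n, with c and n₀ constants), the three notations relate as follows:

```latex
\begin{aligned}
f(n) \in O(g(n))      &\iff \exists\, c > 0,\ n_0 :\ 0 \le f(n) \le c\, g(n) \ \text{for all } n \ge n_0 \\
f(n) \in \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 :\ 0 \le c\, g(n) \le f(n) \ \text{for all } n \ge n_0 \\
f(n) \in \Theta(g(n)) &\iff f(n) \in O(g(n)) \ \text{and} \ f(n) \in \Omega(g(n))
\end{aligned}
```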

So, if an algorithm’s running time is both O(n²) and Ω(n²), then it is Θ(n²).
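Insertion sort (an example not used in the original article) shows why a Θ bound should be attached to a specific case. On already-sorted input it makes n − 1 comparisons, which is Θ(n). On reversed input it makes n(n − 1)/2, which is Θ(n²). Over all inputs, its running time is therefore O(n²) and Ω(n), but not Θ(n²). The minimal Python sketch below counts those comparisons; the function name is an illustrative assumption.

```python
def insertion_sort_comparisons(values: list[int]) -> int:
    """Sort a copy of values with insertion sort; return the number of key comparisons."""
    a = list(values)
    comparisons = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        while j >= 0:
            comparisons += 1
            if a[j] <= key:
                break          # key already belongs after a[j]
            a[j + 1] = a[j]    # shift the larger element one slot right
            j -= 1
        a[j + 1] = key
    return comparisons


n = 1_000
print(insertion_sort_comparisons(list(range(n))))         # 999 = n - 1 (best case, Θ(n))
print(insertion_sort_comparisons(list(range(n, 0, -1))))  # 499500 = n(n-1)/2 (worst case, Θ(n²))
```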

Conclusion

Theta notation is the most precise of the three asymptotic notations, because it bounds growth from both above and below.

It gives you a complete picture of how your program scales. Whether you are learning computer science fundamentals or preparing for coding interviews, mastering Θ notation will make your understanding of algorithms stronger.

Diagram: Contrasting O, Ω, Θ
